Artificial intelligence is profoundly transforming organizations by offering powerful levers for automation, decision support, and innovation.

However, its deployment also raises new technical, ethical, legal, and organizational risks that must be anticipated.

AI risks cover any situation in which an automated system may produce undesirable, biased, or non-compliant outcomes.

Risks associated with AI emerge at all stages of a system’s life cycle: design, training, deployment, and use.

They affect:

The challenge is to ensure reliable, explainable, and ethical use of AI technologies.

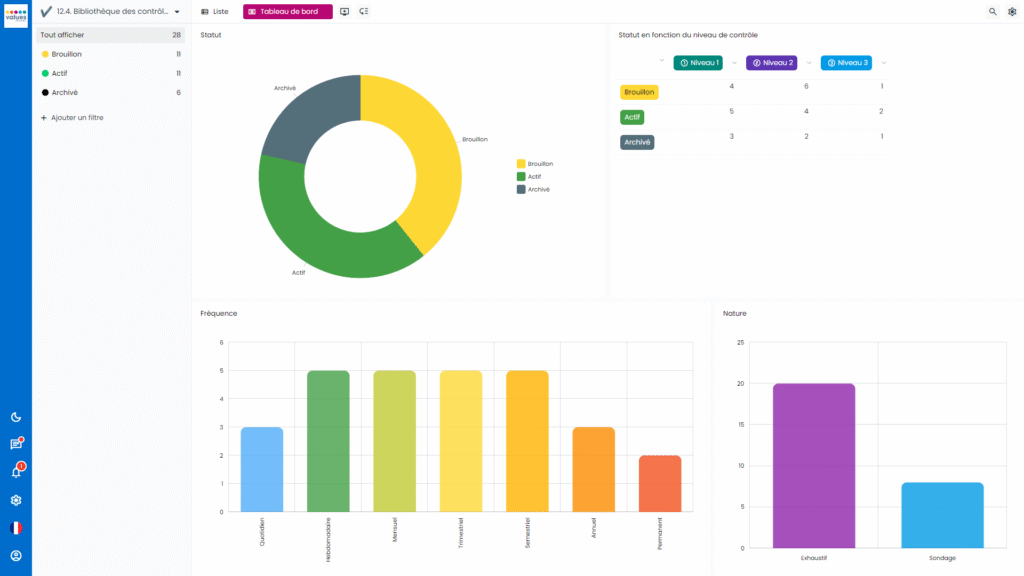

Values Associates software supports organizations in managing risks related to artificial intelligence.

Centralize data and assess risks at every stage of the AI project life cycle.

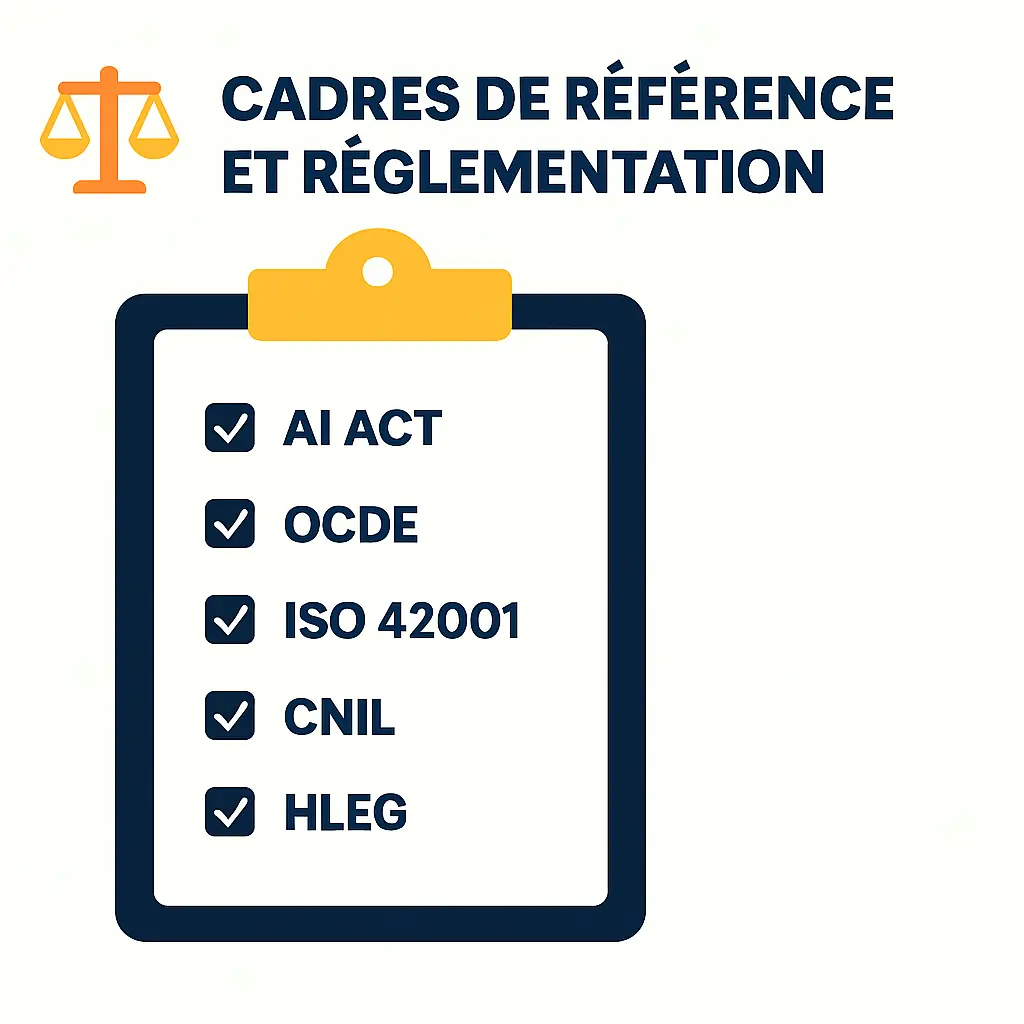

Ensure compliance with regulatory frameworks (AI Act, ISO/IEC 42001, CNIL, OECD).

Strengthen governance and traceability of models within the organization.

Emerging frameworks around AI aim to ensure safe and responsible development:

These frameworks provide a foundation for deploying reliable, explainable, and compliant artificial intelligence solutions.

To master risks related to artificial intelligence:

AI risks go beyond the simple technical dimension: they affect governance, ethics, and trust.

Anticipating them fosters responsible and sustainable innovation, in line with organizational values and obligations.

Values Associates risk management software is part of this approach, offering a comprehensive method to monitor, document, and secure artificial intelligence projects.

AI risks cover algorithmic bias, loss of control, privacy violations, misinformation, and non-compliance with regulatory frameworks such as the European AI Act.

An assessment makes it possible to anticipate ethical, legal, and organizational impacts before deployment, which supports compliant and responsible use of the technology.

The AI Act is the European regulation, in force since August 2024 with obligations applying in phases, that classifies artificial intelligence systems according to their risk level (minimal, limited, high, unacceptable) and imposes specific compliance and transparency obligations.
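For teams that keep an inventory of their AI systems, the four risk levels can be represented as a simple ordered type. The sketch below is illustrative only: the names `RiskLevel`, `OBLIGATION_SUMMARY`, `highest_risk`, and `needs_review` are hypothetical, the one-line obligation summaries are simplified placeholders rather than legal guidance, and the rule that high and unacceptable tiers trigger a review is an assumption, not a requirement taken from the regulation.

```python
from enum import Enum


class RiskLevel(Enum):
    """The four risk tiers, ordered from least to most severe."""
    MINIMAL = 1
    LIMITED = 2
    HIGH = 3
    UNACCEPTABLE = 4


# Simplified, hypothetical summaries for illustration; not legal advice.
OBLIGATION_SUMMARY = {
    RiskLevel.MINIMAL: "no specific obligations",
    RiskLevel.LIMITED: "transparency obligations",
    RiskLevel.HIGH: "compliance obligations",
    RiskLevel.UNACCEPTABLE: "prohibited",
}


def highest_risk(levels):
    """Return the most severe tier found in a portfolio of AI systems."""
    return max(levels, key=lambda level: level.value)


def needs_review(level):
    """Assumed internal rule: high and unacceptable tiers trigger a review."""
    return level.value >= RiskLevel.HIGH.value
```

For example, `highest_risk([RiskLevel.LIMITED, RiskLevel.HIGH])` returns `RiskLevel.HIGH`, and `needs_review` is true for that tier.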

Organizations must adopt clear governance, document the models they use, conduct regular audits, and train teams to recognize ethical issues and bias.
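Documenting models and auditing them regularly can start with a very small register. The following is a minimal sketch under stated assumptions: the `ModelRecord` fields, the `audits_due` helper, and the 365-day audit interval are all hypothetical choices made for illustration, not a description of any particular product or a regulatory requirement.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class ModelRecord:
    """One documented model in a hypothetical internal register."""
    name: str
    owner: str
    purpose: str
    last_audit: date


def audits_due(records, today, max_age_days=365):
    """Return the names of models whose last audit is older than max_age_days."""
    cutoff = today - timedelta(days=max_age_days)
    return [record.name for record in records if record.last_audit < cutoff]


register = [
    ModelRecord("credit-scoring", "risk team", "loan decisions", date(2023, 6, 1)),
    ModelRecord("support-chatbot", "customer care", "answer FAQs", date(2024, 9, 1)),
]

overdue = audits_due(register, today=date(2025, 1, 1))
```

With the sample data above, only the model last audited in June 2023 falls outside the assumed one-year window.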

The Values Associates solution supports risk assessment at every stage of the AI life cycle, helping organizations comply with regulatory frameworks and strengthening trust in automated processes.